The example DAG calls `set_downstream(setup_task)` and defines a helper, `is_midnight(logical_date)`. Two tasks pass `python_callable=lambda prev_start_date_success: prev_start_date_success is None` and `python_callable=lambda prev_start_date_success: prev_start_date_success is not None`, another task runs `bash_command='python /opt/airflow/src/setup.py'`, and the DAG arguments include `datetime(2022, 5, 1, tz='UTC')` and `dagrun_timeout=timedelta(minutes=1)`. Apache Atlas is designed to effectively exchange metadata within Hadoop and the broader data ecosystem.
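The callables mentioned above can be read as small predicates on values that Airflow hands to a task at run time. Below is a minimal standard-library sketch of what they might look like. The body of `is_midnight` is an assumption (the original is cut off after `return logical_date.`), and the names `is_first_run` and `is_later_run` are hypothetical stand-ins for the two lambdas.

```python
from datetime import datetime, timezone
from typing import Optional


def is_midnight(logical_date: datetime) -> bool:
    """Assumed completion: True when the run's logical date is exactly 00:00."""
    return logical_date.hour == 0 and logical_date.minute == 0


def is_first_run(prev_start_date_success: Optional[datetime]) -> bool:
    """Hypothetical name for the first lambda: no earlier successful run exists."""
    return prev_start_date_success is None


def is_later_run(prev_start_date_success: Optional[datetime]) -> bool:
    """Hypothetical name for the second lambda: an earlier run has succeeded."""
    return prev_start_date_success is not None


if __name__ == "__main__":
    start = datetime(2022, 5, 1, tzinfo=timezone.utc)
    print(is_midnight(start), is_first_run(None), is_later_run(start))
```

In the source these predicates are passed as `python_callable`. Which operator consumes them is not shown, so a condition-style operator such as `ShortCircuitOperator` is only one plausible reading.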

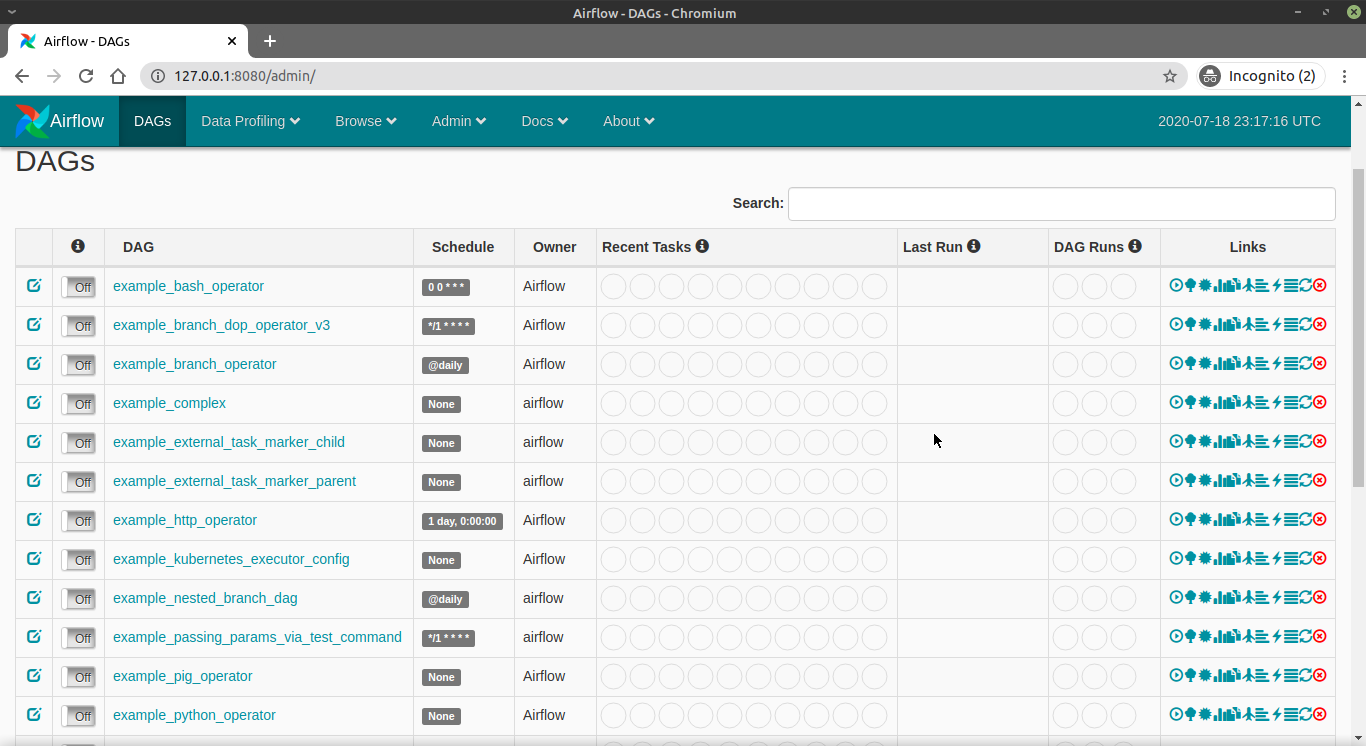

To build a CD process in just five minutes, we will use Buddy. If you want to try it, the free tier allows up to five projects.

Create a new project and choose your Git hosting provider. You can have several pipelines within the same project. For instance, you could have one pipeline for deployment to development (dev), one for user acceptance testing (uat), and one for the production (prod) environment.

Configure when the pipeline should be triggered. For this demo, we want the code to be deployed to S3 on each push to the dev branch.

Next, add the build stages for the deployment process. For this demo, we only need a process to upload code to the S3 bucket, but you could choose from a variety of actions to add unit tests, integration tests, and more. For now, we choose the action “Transfer files to Amazon S3 bucket” and configure it so that any change to Python files in the Git folder `dags` triggers a deployment to the S3 bucket of our choice.

Configure additional “Options” to ensure that the correct file types are uploaded to the correct S3 subfolder. For this demo, we want our DAG files to be deployed to the folder `dags`. In the same action, go to the “Options” tab on the right to configure the remote path on S3. After selecting the proper S3 path, we can test and save the action. Optionally, we can ignore specific file types. For instance, we may want to exclude unit tests (`test*`) and documentation Markdown files.

The last step is optional, but it is useful to be notified if something goes wrong in the deployment process. We can configure several actions that are triggered if something goes wrong in the CI/CD pipeline.

Apache Airflow is already a commonly used tool for scheduling data pipelines. It lets you run and automate simple to complex processes written in Python and SQL. Apache Airflow has become the dominant and ubiquitous Big Data workflow management system, leaving Oozie and other competitors miles behind. The upcoming Airflow 2.0 is going to be a bigger thing, as it implements many new features. Cloudera Data Engineering (CDE) enables you to automate a workflow or data pipeline using Apache Airflow Python DAG files. There are multiple ways to set up and run Apache Airflow on a laptop, and in each approach one can use one of three types of executors.

Apache Airflow Tutorial for Beginners: as a data engineer, you'll frequently be tasked with cleaning up messy data before processing and analyzing it. In our sample data pipeline, we will build a data cleaning pipeline using Apache Airflow that defines and controls the workflows involved in the data cleaning process. One such pipeline script imports `PythonOperator`, `datetime`, `timedelta`, `argparse`, `psycopg2`, `csv`, `os`, `sys`, `google.cloud.bigquery`, and `google.oauth2.service_account`, and defines `postgresqldatabaseconnection(tablename, datafile)`. Another fragment defines the constants `JOB_STATUS_DONE = 'done'` and `HTTP_NO_CONTENT = 204` and a `Client` class whose `__init__` takes `username` and `password`.

A related tutorial introduces TensorFlow Extended (TFX) and helps you learn to create your own machine learning pipelines. It runs locally and shows integration with TFX and TensorBoard, as well as interaction with TFX in Jupyter notebooks. Key Term: a TFX pipeline is a Directed Acyclic Graph, or “DAG”.

Speaker: Varya Karpenko. Track: PyConDE. This talk gives an introduction to Apache Airflow, which facilitates workflow automation and scheduling.
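The upload rules described for the S3 action (deploy Python files from the `dags` folder, skip tests and documentation, keep the `dags` prefix on S3) can be sketched in plain Python. This is not Buddy's configuration format. The function name `s3_key_for`, the constants, and the `*.md` pattern are assumptions made for illustration.

```python
from fnmatch import fnmatch
from pathlib import PurePosixPath
from typing import Optional

SOURCE_DIR = "dags"          # Git folder watched for changes (from the text)
REMOTE_PREFIX = "dags"       # S3 subfolder for DAG files (from the text)
INCLUDE = ["*.py"]           # only Python files trigger a deployment
EXCLUDE = ["test*", "*.md"]  # "*.md" is an assumed pattern for Markdown docs


def s3_key_for(repo_path: str) -> Optional[str]:
    """Return the S3 key a repo file would be uploaded to, or None if skipped."""
    path = PurePosixPath(repo_path)
    # Only files under the watched folder are candidates.
    if not path.parts or path.parts[0] != SOURCE_DIR:
        return None
    # Exclusions win over inclusions, so test files are skipped even if *.py.
    if any(fnmatch(path.name, pattern) for pattern in EXCLUDE):
        return None
    if not any(fnmatch(path.name, pattern) for pattern in INCLUDE):
        return None
    relative = PurePosixPath(*path.parts[1:])
    return str(PurePosixPath(REMOTE_PREFIX) / relative)


if __name__ == "__main__":
    for candidate in ["dags/etl.py", "dags/test_etl.py", "dags/README.md", "src/setup.py"]:
        print(candidate, "->", s3_key_for(candidate))
```

Checking exclusions before inclusions mirrors the intent in the text: a file such as `dags/test_etl.py` is a Python file but should still stay out of the bucket.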
